🧠 AuditSec Intel™ 1064 – “The Shadow Automation Threat: When AI Agents Acted Faster Than Governance in 2025”

🔍 Introduction — The Action Nobody Approved

In 2025 breach and failure investigations, CISORadar identified a new pattern:

No malware.

No compromised user.

No malicious intent.

Just automation acting on trust.

AI agents, scripts, bots, and automated workflows executed actions:

- Without approvals

- Without logging

- Without rollback

- Without accountability

CISORadar calls this: The Shadow Automation Threat.

⚠️ 2025 Reality — Automation Without Authority

| Automation Type | Trigger | Governance Gap | Impact |
|---|---|---|---|
| AI remediation bot | Alert threshold | No human-in-loop | Production outage |
| SOAR playbook | False positive | No approval gate | Data deletion |
| Cloud auto-scaler | Cost signal | Excessive permissions | Resource exposure |
| IAM auto-provisioning | HR sync | Logic error | Privilege escalation |
| DevOps script | CI failure | No change control | Security controls disabled |

CISORadar Insight:

“Automation didn’t fail —

governance never existed.”

🧩 Ignored Control: ISO 27001 A.5.37 / A.8.9 / NIST SI-7, CM-3 — Automated Action Governance

| Control Area | Objective | Common Failure |

|---|---|---|

| Automation Inventory | Know what runs | Shadow scripts |

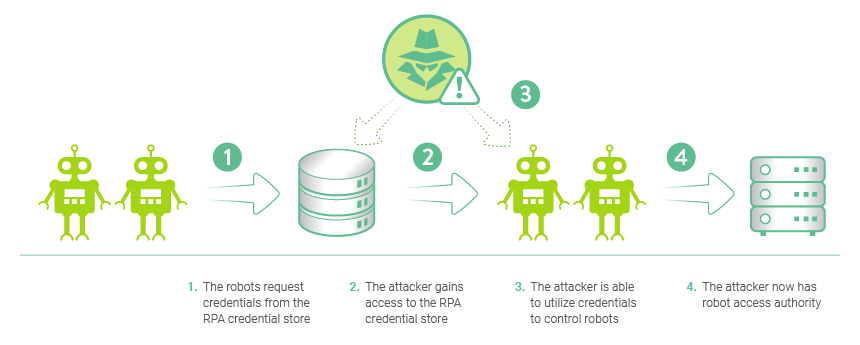

| Authority Model | Define what can act | Over-privileged bots |

| Approval Gates | Human validation | Full autonomy |

| Logging | Trace automated actions | No audit trail |

| Rollback | Reverse bad actions | One-way execution |

| Segregation | Separate dev/prod | Same permissions |
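To make the control areas above concrete, here is a minimal Python sketch of how an automation identity could be wrapped so that every action passes through an authority check, an approval gate, an audit log, and a rollback path. It is an illustration under stated assumptions, not CISORadar's implementation: `Action`, `GovernedExecutor`, the `approver` hook, and the outcome strings are all hypothetical names introduced here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Action:
    name: str                      # e.g. "disable_firewall_rule" (hypothetical)
    execute: Callable[[], None]    # performs the change
    rollback: Callable[[], None]   # reverses the change
    high_impact: bool = False      # True means a human must approve first


@dataclass
class GovernedExecutor:
    bot_id: str
    allowed_actions: set[str]                    # authority model
    approver: Callable[[str, Action], bool]      # approval gate (hypothetical hook)
    audit_log: list[dict] = field(default_factory=list)

    def _log(self, action: Action, outcome: str) -> str:
        # Every decision is recorded, including denials.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "bot": self.bot_id,
            "action": action.name,
            "outcome": outcome,
        })
        return outcome

    def run(self, action: Action) -> str:
        if action.name not in self.allowed_actions:
            return self._log(action, "denied_out_of_mandate")
        if action.high_impact and not self.approver(self.bot_id, action):
            return self._log(action, "denied_no_approval")
        try:
            action.execute()
        except Exception:
            action.rollback()  # reverse a partially applied change
            return self._log(action, "rolled_back")
        return self._log(action, "executed")


# Usage: a remediation bot tries to disable a firewall rule without approval.
state = {"firewall_enabled": True}
disable_fw = Action(
    name="disable_firewall_rule",
    execute=lambda: state.update(firewall_enabled=False),
    rollback=lambda: state.update(firewall_enabled=True),
    high_impact=True,
)
bot = GovernedExecutor(
    bot_id="remediation-bot",
    allowed_actions={"disable_firewall_rule"},
    approver=lambda bot_id, action: False,  # stand-in for a human saying "no"
)
result = bot.run(disable_fw)
print(result)  # -> denied_no_approval
```

In this sketch the denial itself is logged, so an auditor can see what the bot attempted as well as what it did.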

💬 CISORadar Observation:

“Organizations audited people —

but trusted machines blindly.”

🧠 CISORadar Control Test of the Week

Control Reference: ISO 27001 A.5.37 / NIST SI-7

Objective: Ensure automation cannot act beyond its mandate.

🔍 Test Steps

1️⃣ Inventory all automated workflows, bots, and agents.

2️⃣ Identify actions executed without approval.

3️⃣ Review permissions assigned to automation identities.

4️⃣ Validate approval and rollback mechanisms.

5️⃣ Test false-positive automation scenarios.

6️⃣ Review automation logs and traceability.

7️⃣ Simulate AI-agent misclassification.

8️⃣ Calculate Automation Risk Index (ARI).
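The article does not define how the ARI is calculated. As one hedged illustration of step 8, the sketch below scores each inventoried automation by the share of governance controls it is missing and averages the result on a 0 to 100 scale. The record fields, the equal weighting, and the function names are assumptions made for this example, not a published CISORadar formula.

```python
from dataclasses import dataclass


@dataclass
class AutomationRecord:
    name: str
    authority_defined: bool   # is the mandate documented?
    approval_gate: bool       # human validation for risky actions
    audit_logging: bool       # actions are traceable
    rollback_tested: bool     # bad actions can be reversed
    env_segregated: bool      # dev and prod permissions differ


def missing_control_ratio(rec: AutomationRecord) -> float:
    """Fraction of the five controls that this automation lacks (0.0 to 1.0)."""
    controls = [
        rec.authority_defined,
        rec.approval_gate,
        rec.audit_logging,
        rec.rollback_tested,
        rec.env_segregated,
    ]
    return sum(1 for present in controls if not present) / len(controls)


def automation_risk_index(records: list[AutomationRecord]) -> float:
    """Hypothetical ARI: mean missing-control ratio, scaled to 0-100."""
    if not records:
        return 0.0
    total = sum(missing_control_ratio(r) for r in records)
    return round(100 * total / len(records), 1)


inventory = [
    AutomationRecord("soar-playbook", True, True, True, True, True),
    AutomationRecord("remediation-bot", True, False, False, False, False),
]
ari = automation_risk_index(inventory)
print(ari)  # -> 40.0
```

Equal weights keep the example simple; a real scorecard would likely weight controls by the impact of the actions each automation can take.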

🔎 Expected Outcomes

✅ All automation inventoried

✅ Authority boundaries defined

✅ Human-in-loop enforced

✅ Full audit logs present

✅ Rollback tested

Tools Suggested:

SOAR | Workflow Engines | IAM for Bots | Change Management | CISORadar Automation Control Lens

🧨 Real Case: “The Bot That Took Down Production”

An AI remediation bot detected “anomalous traffic”.

It disabled firewall rules.

The traffic was legitimate.

Downtime: 14 hours

Loss: ₹620 Crore

Lesson:

“Automation scales mistakes faster than humans ever could.”

🚀 CISORadar Impact Model – Automation Risk Index (ARI)

| Metric | Before CISORadar | After CISORadar |
|---|---|---|
| Shadow Automation | Widespread | Eliminated |
| Approval Gates | Rare | Mandatory |
| Bot Permissions | Over-scoped | Least privilege |
| Automation Logging | Partial | Complete |
| AI Incident Risk | High | Controlled |
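Moving the "Bot Permissions" row from over-scoped to least privilege implies comparing what each automation identity is granted with what it actually uses. A minimal sketch of that comparison follows, assuming both sets can be exported as plain strings; the permission names and the `excess_permissions` helper are hypothetical and not tied to any specific IAM product.

```python
def excess_permissions(granted: set[str], used: set[str]) -> list[str]:
    """Return permissions that are granted but never observed in use."""
    return sorted(granted - used)


# Hypothetical export for one automation identity.
granted = {"firewall:read", "firewall:write", "storage:delete", "iam:assign_role"}
used = {"firewall:read", "firewall:write"}

candidates_for_removal = excess_permissions(granted, used)
print(candidates_for_removal)  # -> ['iam:assign_role', 'storage:delete']
```

Permissions flagged this way are candidates for review rather than automatic removal, since a rarely used permission may still be needed for rollback.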

🧭 Leadership Takeaway

“AI-era security fails

when actions outpace authority.”

Boards must ask:

👉 What automated actions exist today?

👉 Who approved them?

👉 Can we stop them instantly?

👉 Can we prove what they did?

CISORadar ensures automation earns trust instead of assuming it.

📩 Download

Automation Governance Audit Checklist + ARI Scorecard

(ISO 27001 / NIST)

Available inside the CISORadar Cyber Authority Community.

🔖 SEO Tags

#AuditSecIntel #AutomationRisk #AIGovernance #SOAR #ISO27001 #NISTSI7 #CISORadar #DigitalTrust #AIControls #CyberGovernance